In a classic experiment by psychologists Felix Warneken and Michael Tomasello on human social

Then something remarkable happens: the toddler offers to help. Having inferred the man's goal, the toddler walks to the closet and opens its doors, allowing the man to put his books inside. But how can a toddler with such limited life experience make such an inference?

Recently, computer scientists have turned this question toward computers: how can machines do the same?

A critical component in forming such understanding is mistakes. Just as the toddler could infer the man's goal from his failure alone, machines that infer a person's goals must take into account our mistaken actions and plans.

In an effort to recreate this social intelligence in machines, researchers at the Massachusetts Institute of Technology's Computer Science and Artificial Intelligence Laboratory (CSAIL) and the Department of Brain and Cognitive Sciences have created an algorithm capable of inferring goals and plans, even when those plans might fail.

This type of research could eventually be used to improve a range of assistive technologies, collaborative or caretaking robots, and digital assistants such as Siri and Alexa.

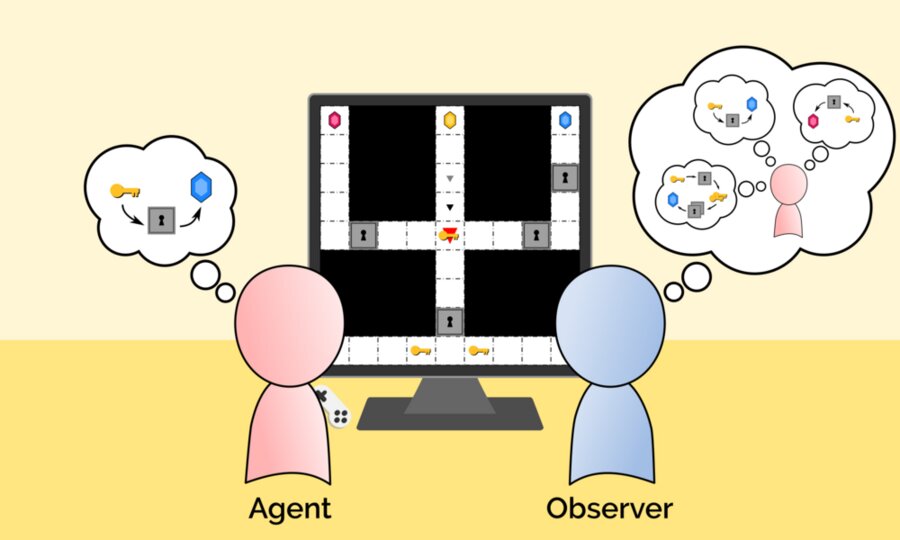

"Agent" and "Observer" demonstrate how newMIT's algorithm is capable of identifying goals and plans even if those plans might fail. Here the agent makes a faulty plan to reach the blue gem that the observer considers possible. Credit: Massachusetts Institute of Technology

"This ability to account for mistakes could be critical to building machines that reliably infer our goals and act on our behalf," explains Tan Zhi-Xuan, a PhD student at the Massachusetts Institute of Technology (MIT) and lead author of a new research paper. "Otherwise, AI systems may mistakenly conclude that because we failed to achieve our higher-order goals, those goals were ultimately undesirable."

To create their model, the team used Gen, a new AI programming platform recently developed at MIT, to combine symbolic AI planning with Bayesian inference. Bayesian inference provides an optimal way to combine uncertain beliefs with new data and is widely used for financial risk assessment, diagnostic testing, and election forecasting.
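To make the idea of combining uncertain beliefs with new data concrete, here is a minimal Python sketch of a Bayesian update over possible goals. It is an illustration only, not the team's model and not Gen code: the gem names, the likelihood numbers, and the function `bayes_update` are all assumptions invented for this example.

```python
def bayes_update(prior, likelihoods):
    """Return the posterior P(goal | observation).

    prior:       dict mapping each goal to its current probability
    likelihoods: dict mapping each goal to P(observation | goal)
    """
    unnormalized = {goal: prior[goal] * likelihoods[goal] for goal in prior}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation has zero probability under every goal")
    return {goal: p / total for goal, p in unnormalized.items()}


# Hypothetical setup: the observer starts with no preference among three gems.
beliefs = {"red": 1 / 3, "blue": 1 / 3, "yellow": 1 / 3}

# Assumed likelihoods of two observed moves under each candidate goal.
# No goal is assigned probability zero, so a clumsy or mistaken move
# lowers belief in a goal without ruling it out entirely.
observations = [
    {"red": 0.2, "blue": 0.6, "yellow": 0.2},
    {"red": 0.1, "blue": 0.8, "yellow": 0.1},
]

for likelihoods in observations:
    beliefs = bayes_update(beliefs, likelihoods)

most_likely = max(beliefs, key=beliefs.get)
print(most_likely, beliefs)
```

Each observation reweights the current beliefs, so evidence accumulates over time: after the two moves above, the observer's belief is concentrated on the blue gem while the other goals remain possible.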

In creating the algorithm, called "Sequential Inverse Plan Search" (SIPS), the scientists were inspired by a common way of human planning that is largely sub-optimal. A person may not plan everything in advance, but rather form partial plans, carry them out, and then plan again based on the new results. While this can lead to mistakes from not thinking enough "ahead of time", it also reduces the cognitive load.
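The plan-a-little, act, then plan-again loop described above can be sketched in a few lines of Python. This is not the SIPS algorithm itself, only a toy model of the kind of bounded planner it assumes people resemble. The grid world, the `budget` parameter, and both function names are hypothetical choices made for illustration.

```python
from collections import deque


def partial_plan(start, goal, walls, size, budget):
    """Breadth-first search that stops after `budget` node expansions.

    Returns a path to the goal if it is reached within the budget, otherwise
    a path to the expanded cell closest to the goal (Manhattan distance).
    """
    def dist(cell):
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    parents = {start: None}
    frontier = deque([start])
    best = start
    expanded = 0
    while frontier and expanded < budget:
        cell = frontier.popleft()
        expanded += 1
        if dist(cell) < dist(best):
            best = cell
        if cell == goal:
            break
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            in_bounds = 0 <= nxt[0] < size and 0 <= nxt[1] < size
            if in_bounds and nxt not in walls and nxt not in parents:
                parents[nxt] = cell
                frontier.append(nxt)

    # Walk back from the best cell to recover the (possibly partial) path.
    path = []
    cell = best
    while cell is not None:
        path.append(cell)
        cell = parents[cell]
    return path[::-1]


def act_and_replan(start, goal, walls, size, budget, max_rounds=20):
    """Alternate partial planning and execution; return the full trajectory."""
    position = start
    trajectory = [start]
    for _ in range(max_rounds):
        if position == goal:
            break
        plan = partial_plan(position, goal, walls, size, budget)
        if len(plan) == 1:
            break  # no closer cell found within the budget: the agent is stuck
        trajectory.extend(plan[1:])
        position = plan[-1]
    return trajectory


# Hypothetical 5x5 grid with a short wall between the agent and the goal.
walls = {(2, 0), (2, 1)}
short_sighted = act_and_replan((0, 0), (4, 0), walls, size=5, budget=3)
far_sighted = act_and_replan((0, 0), (4, 0), walls, size=5, budget=25)
print("budget 3 ends at:", short_sighted[-1])
print("budget 25 ends at:", far_sighted[-1])
```

With a small search budget the agent walks straight toward the goal and gets stuck at the wall, while a larger budget finds the detour. An observer who knows the agent plans this way can still treat the stuck trajectory as evidence for the goal behind the wall, rather than concluding the agent never wanted it.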

The scientists hope their research will lay the groundwork for the new philosophical and conceptual frameworks needed to create machines that truly understand human goals, plans and values. The underlying approach of modeling humans as imperfect thinkers seems very promising to engineers.
