Space of random events

In 1946, the American statistician John Tukey proposed the name BIT

Imagine a space of random eventswhich consists of throwing one fake coin, on both sides of which is an eagle. When does an eagle fall? It is clear that always. We know this in advance, because our space is so arranged. The falling of an eagle is a reliable event, that is, its probability is equal to 1. Will we give much information if we say about a dropped eagle? Not. The amount of information in such a message, we will be considered equal to 0.

Now let's flip the fair coin:on one side it is heads, and on the other, tails, as it should be. Landing heads or tails will be two different events that make up our space of random events. If we report the outcome of one toss, it will indeed be new information. If heads are dropped, we will report 0, and if tails are 1. In order to report this information, we only need 1 bit.

What changed?Uncertainty has appeared in our event space. We have something to tell about it to someone who doesn’t throw a coin himself and doesn’t see the outcome of the toss. But to properly understand our message, he must know exactly what we are doing and what the 0s and 1s mean.Our event space must match, and the decoding process is uniquely restore the result of the throw.If the event space of the transmitter and receiver does not coincide or there is no possibility of unambiguous decoding of the message, the information will remain only noise in the communication channel.

If you throw two independently and simultaneouslycoins, then there will be four different equally probable results: heads-heads, heads-tails, tails-heads and tails-tails. To transmit information, we will need 2 bits, and our messages will be as follows: 00, 01, 10 and 11. There is twice as much information. This happened because uncertainty increased. If we try to guess the outcome of such a paired toss, we have twice the chance of being wrong.

The greater the uncertainty of the event space, the more information the message about its state contains.

Let's complicate our event space a little.So far, all the events that have happened have been equally probable. But in real spaces, not all events have equal probability. Let's say the probability that the crow we see will be black is close to 1. The probability that the first passerby we meet on the street will be a man is approximately 0.5. But meeting a crocodile on the streets of Moscow is almost impossible. Intuitively, we understand that a report about a meeting with a crocodile has much greater information value than about a black crow.The lower the probability of an event, the more information in the message about such an event.

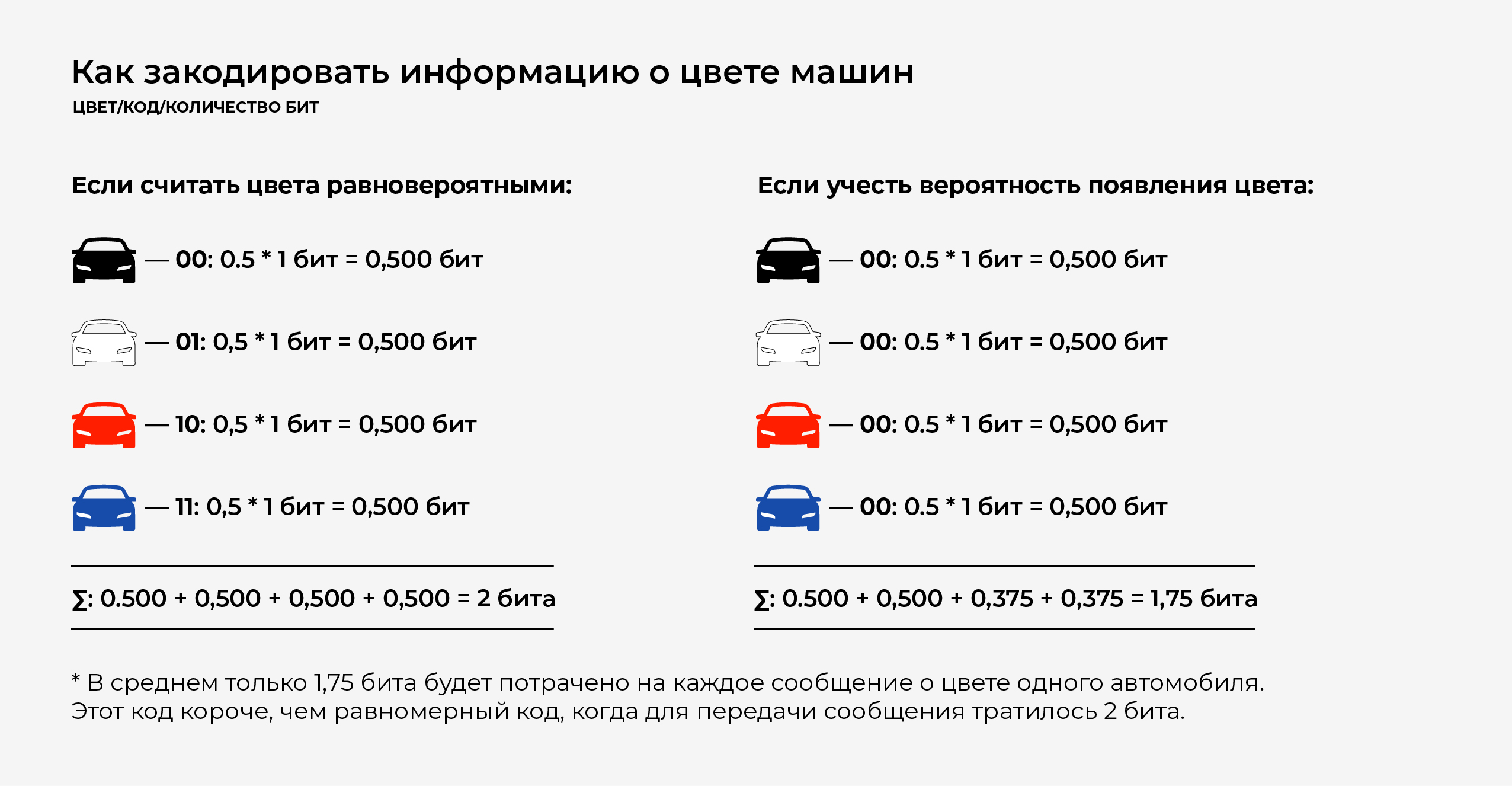

Let the event space not be so exotic. We just stand at the window and look at the passing cars. Cars passing by in four colors, which we need to tell. To do this, we encode the colors: black - 00, white - 01, red - 10, blue - 11. To report exactly which car drove, we just need to transfer 2 bits of information.

But for quite a long time watching the cars,We note that the color of cars is uneven: black - 50% (every second), white - 25% (every fourth), red and blue - 12.5% (every eighth). Then you can optimize the transmitted information.

Most of the cars are black, solet's denote black - 0 - the shortest code, and let the code of all the rest start at 1. Of the remaining half, white - 10, and the remaining colors start at 11. Finally, let's denote red - 110, and blue - 111.

Now, passing information about the color of the car, we can encode it more closely.

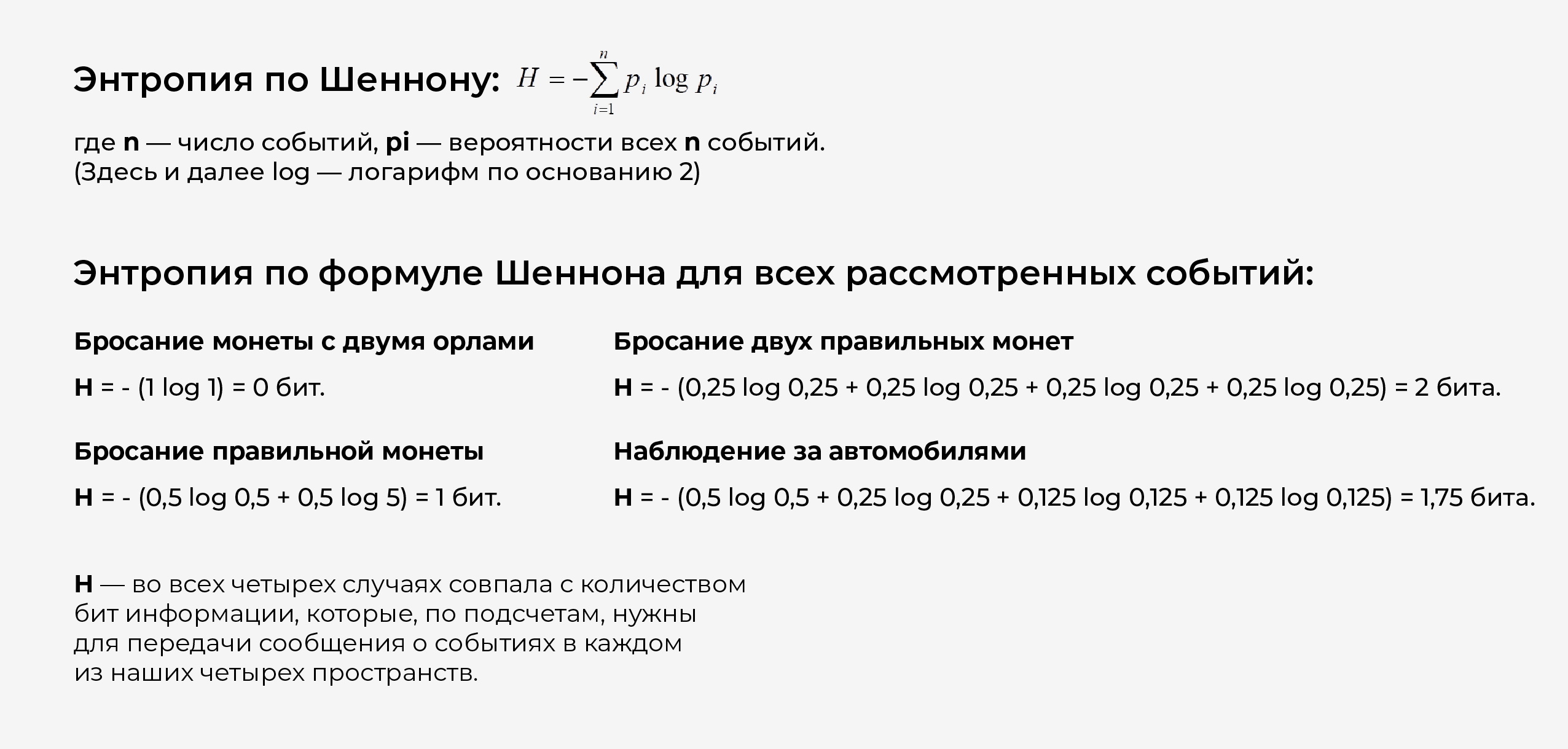

Shannon Entropy

Let our event space consist of ndifferent events. When tossing a coin with two heads there is exactly one such event, when tossing one fair coin there is exactly 2, when tossing two coins or watching cars there is exactly 4. Each event has a probability of its occurrence. When throwing a coin with two heads, there is one event (falling out heads) and its probability is p1 = 1. When throwing a fair coin, there are two events, they are equally probable and the probability of each is 0.5: p1 = 0.5, p2 = 0.5. When throwing two fair coins, there are four events, they are all equally probable and the probability of each is 0.25: p1 = 0.25, p2 = 0.25, p3 = 0.25, p4 = 0.25. When observing cars, there are four events, and they have different probabilities: black - 0.5, white - 0.25, red - 0.125, blue - 0.125: p1 = 0.5, p2 = 0.25, p3 = 0.125, p4 = 0.125.

This is not a coincidence.Shannon chose entropy (a measure of uncertainty in event space) so that three conditions were met:

- 1The entropy of a reliable event, the probability of which is 1, is equal to 0.

- The entropy of two independent events is equal to the sum of the entropies of these events.

- Entropy is maximal if all events are equally probable.

All these requirements are fully consistent with ourideas about the uncertainty of the event space. If there is only one event (the first example), there is no uncertainty. If the events are independent - the uncertainty of the sum is equal to the sum of the uncertainties - they simply add up (the example of throwing two coins). And finally, if all events are equally probable, then the degree of uncertainty of the system is maximum. As in the case of throwing two coins, all four events are equally probable and the entropy is 2, it is greater than in the case of cars, when there are also four events, but they have different probabilities - in this case the entropy is 1.75.

The quantity H plays a central role in information theory as a measure of information, choice, and uncertainty.

Claude Shannon

Claude Elwood Shannon- American engineer, cryptanalyst andmathematician. Considered the "father of the information age". Founder of information theory, which has found application in modern high-tech communication systems. Provided fundamental concepts, ideas and their mathematical formulations that currently form the basis for modern communication technologies.

In 1948, proposed to use the word "bit"to indicate the smallest unit of information. He also demonstrated that the entropy entered by him is equivalent to the uncertainty of the information in the transmitted message. Shannon's articles "Mathematical Theory of Communication" and "Theory of Communication in Secret Systems" are considered fundamental to information theory and cryptography.

During World War II, Shannon worked at Bell Laboratories to develop cryptographic systems, which later helped him discover error-correcting coding methods.

Shannon made a key contribution to the theory of probabilistic schemes, game theory, automaton theory and control system theory — fields of science that are part of the concept of cybernetics.

Coding

And thrown coins, and passing cars are notare similar to the numbers 0 and 1. To report events occurring in spaces, you need to think of a way to describe these events. This description is called coding.

Messages can be encoded in an infinite number of different ways. But Shannon showed that the shortest code cannot be smaller in bits than the entropy.

That is why the entropy of a message is a measureinformation in the message. Since in all the considered cases the number of bits during encoding is equal to entropy, this means that the encoding was optimal. In short, it is no longer possible to encode messages about events in our spaces.

With optimal coding, you cannot lose ordistort a single transmitted bit in the message. If even one bit is lost, the information will be distorted. But all real communication channels do not provide 100 percent confidence that all bits of the message will reach the recipient undistorted.

To fix this problem you need to dothe code is not optimal, but redundant. For example, transmit along with the message its checksum - a specially calculated value obtained when converting the message code, and which can be verified by recalculating upon receipt of the message. If the transmitted checksum matches the calculated one, the probability that the transmission was error-free will be quite high. And if the checksum does not match, then a retransmission must be requested. This is roughly how most communication channels work today, for example, when transmitting packets of information over the Internet.

Natural language messages

Consider the event space that isfrom posts in natural language. This is a special case, but one of the most important. Events here will be the transmitted characters (letters of the fixed alphabet). These characters are found in the language with different probabilities.

The most frequency symbol (i.e. one thatis most often found in all texts written in Russian) is a space: of a thousand characters, an average space is found 175 times. The second in frequency is the symbol "o" - 90, followed by other vowels: "e" (or "e" - we will not distinguish them) - 72, "a" - 62, "and" - 62, and only further meets the first consonant "t" - 53. And the rarest "f" - this symbol is found only twice per thousand characters.

We will use the 31-letter alphabet of Russianlanguage (it does not differ "e" and "e", as well as "ъ" and "ь"). If all the letters were encountered in the language with the same probability, then the entropy per symbol would be H = 5 bits, but if we take into account the real frequencies of the symbols, the entropy will be less: H = 4.35 bits. (This is almost two times less than with traditional coding, when a character is transmitted as a byte - 8 bits).

But the entropy of the character in the language is even lower. The probability of occurrence of the next character is not completely predetermined by the average frequency of the character in all texts. Which character will follow depends on the characters already transferred. For example, in modern Russian after the symbol "ъ" can not follow the symbol of a consonant sound. After two consecutive vowels "e", the third vowel "e" follows extremely rarely, except in the word "long-necked". That is, the next character is to some extent predetermined. If we take into account such predetermination of the next symbol, the uncertainty (that is, information) of the next symbol will be even less than 4.35. According to some estimates, the next character in Russian is predetermined by the structure of the language by more than 50%, that is, with optimal coding, all information can be transmitted by deleting half of the letters from the message.

Another thing is that not every letter can be crossed out painlessly. High-frequency "o" (and generally vowels), for example, is easy to cross out, but rare "f" or "e" is quite problematic.

The natural language in which we communicate with each other is highly redundant, and therefore reliable; if we misheard something, it’s okay, the information will still be transmitted.

But until Shannon introduced the measure of information, we could not understand that the language was redundant, and to what extent we could compress the messages (and why text files are so well compressed by the archiver).

Natural language redundancy

In the article "About how we vorpsimanie tektkt"(the name sounds exactly like this!) a fragment of Ivan Turgenev's Noble Nest novel was taken and subjected to some transformation: 34% of letters were deleted from the fragment, but not random ones. The first and last letters in words were left, only vowels were crossed out, and not all. The goal was not only to get the opportunity to recover all the information on the converted text, but also to ensure that the person reading this text did not experience any particular difficulties due to missing letters.

Why is it relatively easy to read this corruptedtext? It actually contains the necessary information to reconstruct entire words. A native speaker of Russian has a certain set of events (words and entire sentences) that he uses in recognition. In addition, the speaker also has standard language structures at his disposal that help him recover information. For example,"She is blae blee"- with a high probability can be read as"She was more sensitive.". But taken separately"She's more blah", rather, will be restored as“She was whiter”. Since in everyday communication we dealwith channels in which there is noise and interference, we are quite good at restoring information, but only that which we already know in advance. For example, the phrase"Her features are not in the least pleasant, htya nanny rspkhli and zlls"reads well except for the last word"Splash" - "rallied". This word is not in the modern lexicon. When reading a word quickly"Splash"reads more like “stuck together”; when slow, it simply baffles.

Signal Digitization

Sound, or acoustic oscillations - this is a sine wave. This is seen, for example, on the sound editor screen. To accurately transmit the sound, you will need an infinite number of values - the entire sine wave. This is possible with an analog connection. He sings - you listen, the contact is not interrupted while the song lasts.

In digital communication over a channel, we can only transmit a finite number of values. Does this mean that the sound cannot be reproduced accurately? It turns out not.

Different sounds are a differently modulated sine wave.We transmit only discrete values (frequencies and amplitudes), but we don’t need to transmit the sinusoid itself - the receiving device can generate it.It generates a sinusoid, and is superimposed on itmodulation created from values transmitted over a communication channel. There are exact principles of which discrete values must be transmitted so that the sound at the input to the communication channel coincides with the sound at the output, where these values are superimposed on some standard sinusoid (this is what Kotelnikov’s theorem is about).

Kotelnikov's theorem (in the English-language literature - the Nyquist-Shannon theorem, the reading theorem)- a fundamental statement in the field of digitalsignal processing, connecting continuous and discrete signals and stating that “any function F(t), consisting of frequencies from 0 to f1, can be continuously transmitted with any accuracy using numbers following each other through 1/(2*f1) seconds

Anti-interference coding. Hamming codes

If on an unreliable channel to transmitIvan Turgenev's coded text, albeit with some errors, will result in a quite meaningful text. But if we need to transfer everything up to a bit, the task will be unresolved: we do not know which bits are wrong, because the error is random. Even the checksum does not always save.

That is why today when transmitting data onnetworks tend not so much to optimal coding, in which the maximum amount of information can be pushed into the channel, but rather to such coding (obviously redundant) in which errors can be recovered - just like when we read the words in Ivan Turgenev's fragment.

There are special error-correcting codes that allow you to recover information after a failure. One of them is Hamming code.Let's say our entire language consists of three words:111000, 001110, 100011. Both the source of the message and the receiver know these words. And we know that errors occur in a communication channel, but when transmitting one word, no more than one bit of information is distorted.

Suppose we first pass the word 111000. As a result, no more than one error (we have identified the error) it can turn into one of the words:

1) 111000,011,000, 101000, 110000, 111100, 111010, 111001.

When you send the word 001110, you can get any of the words:

2) 001110,101110, 011110, 000110, 001010, 001100, 001111.

Finally, for 100011 we can get at the reception:

3) 100011,000011, 110011, 101011, 100111, 100001, 100010.

Note that all three lists are not pairwise.intersect. In other words, if at the other end of the communication channel any word from list 1 appears, the recipient knows for sure that the word 111000 was transmitted to him, and if any word from list 2 appears, the word 001110, and from list 3, the word 100011 appears. In this case They say that our code fixed one error.

The correction was due to two factors.First, the recipient knows the whole “dictionary”, i.e. the event space of the message recipient coincides with the space of the one who transmitted the message. When the code was transmitted with only one error, a word came out, which was not in the dictionary.

Secondly, the words in the dictionary were chosen in a special way.Even if an error occurred, the recipient could notconfuse one word with another. For example, if the dictionary consists of the words “daughter”, “dot”, “bump”, and during transmission the result was “vochka”, then the recipient, knowing that such a word does not exist, would not be able to correct the error - any of the three words may turn out to be correct. If the dictionary includes “dot”, “daw”, “branch” and we know that no more than one mistake is allowed, then “vochka” is definitely a “dot” and not a “daw”. In error-correcting codes, words are chosen precisely so that they are “recognizable” even after an error. The only difference is that the code “alphabet” has only two letters - zero and one.

The redundancy of such coding is very large, and the number of words that we can thus convey is relatively small.We need to exclude any word from the dictionary,which, in case of an error, may coincide with the entire list corresponding to the transmitted words (for example, the words “daughter” and “dot” cannot be in the dictionary). But accurate message transmission is so important that great effort is spent on research into error-resistant codes.

Sensation

Concepts of entropy (or uncertainty andunpredictability) of the message and redundancy (or predetermination and predictability) very naturally correspond to our intuitive ideas about the measure of information. The more unpredictable the message (the greater its entropy, because there is less likelihood), the more information it carries. A sensation (for example, a meeting with a crocodile on Tverskaya) is a rare event, its predictability is very low, and therefore the information value is high. Often information is called news — reports of events that have just occurred, about which we still know nothing. But if they tell us about the second and third times about the same words, the redundancy of the message will be great, its unpredictability will drop to zero, and we simply will not listen, dismissing the speaker with the words "I know, I know." Therefore, the media are trying so hard to be the first. This correspondence to the intuitive feeling of novelty, which gives rise to really unexpected news, played a major role in the fact that Shannon’s article, which was not intended for the general reader, became a sensation, which the press picked up as a universal key to the knowledge of nature. - from linguists and literary critics to biologists.

ButShannon information concept - rigorous mathematical theory, and its application outside of communication theory is very unreliable. But in the theory of communication itself, it plays a central role.

Semantic information

Shannon, introducing the concept of entropy as a measureinformation, got the opportunity to work with information - first of all, to measure it and evaluate characteristics such as channel capacity or optimal coding. But the main assumption that allowed Shannon to successfully operate with information was the assumption that the generation of information is a random process that can be successfully described in terms of probability theory.If the process is non-random, that is, it obeys the laws (moreover, it is not always clear, as it happens in natural language), then Shannon's reasoning is not applicable to it.Nothing Shannon says has anything to do with the information being meaningful.

While we are talking about characters (or letters of the alphabet),we can well argue in terms of random events, but as soon as we get to the words of the language, the situation will change dramatically. Speech is a process that is specially organized, and here the structure of the message is no less important than the characters by which it is transmitted.

Just recently it seemed like we couldn't do anything.done to get at least somehow closer to measuring the meaningfulness of the text, but in recent years the situation has begun to change. And this is primarily due to the use of artificial neural networks for the tasks of machine translation, automatic summarization of texts, extracting information from texts, and generating reports in natural language. All of these tasks involve transforming, encoding, and decoding meaningful information contained in natural language. And gradually an idea is formed about information losses during such transformations, and therefore about the extent of meaningful information. But today, the clarity and accuracy that Shannon’s information theory has is not yet available in these difficult problems.