Facebook will use computer vision and natural language processing systems that are already

The robot is planned to be completely autonomous and self-learning: its systems must learn directly from raw data. The company believes this will let the device adapt more quickly to new challenges and changing circumstances. The artificial intelligence will be based on reinforcement learning (RL), which lets robots learn independently through trial and error.
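To make "learning through trial and error" concrete, here is a minimal sketch of tabular Q-learning, one textbook form of RL. It is not Facebook's method: the one-dimensional "corridor" task, the function names `train_q_learning` and `greedy_policy`, and all parameter values are assumptions chosen only for illustration. The agent starts with no knowledge of how to move and is rewarded only when it reaches the last cell.

```python
import random

def train_q_learning(n_states=5, episodes=500, alpha=0.5,
                     gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values on a toy corridor (hypothetical task).

    States are cells 0..n_states-1; the last cell is the goal.
    Action 0 steps left, action 1 steps right.
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]
    goal = n_states - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.randrange(2)  # explore: try a random move
            else:
                # exploit: pick the best-known move, breaking ties randomly
                best = max(q[state])
                action = rng.choice([a for a in (0, 1) if q[state][a] == best])
            if action == 0:
                next_state = max(0, state - 1)
            else:
                next_state = min(goal, state + 1)
            reward = 1.0 if next_state == goal else 0.0
            # No future value is added once the goal is reached.
            future = 0.0 if next_state == goal else gamma * max(q[next_state])
            q[state][action] += alpha * (reward + future - q[state][action])
            state = next_state
            if state == goal:
                break
    return q

def greedy_policy(q):
    """Best-known action for every non-goal state (1 means step right)."""
    return [0 if left > right else 1 for left, right in q[:-1]]

if __name__ == "__main__":
    print(greedy_policy(train_q_learning()))
```

Early episodes are essentially random wandering; as rewards accumulate, the learned values steer the agent toward the goal. A walking robot faces a far larger state and action space, but the same loop of acting, observing a reward, and updating applies.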

We would like to teach the robot to walk without help. Movement is a very difficult task in robotics, and this makes it very exciting, from our point of view.

Facebook Research Developer Roberto Calandra

A distinctive feature of Facebook's robot is that no movement algorithms are programmed into it. Initially it cannot walk, but using the learning algorithm it gradually begins to interact with its controllers, which can already be activated for movement. The more experience the robot gains, the better it performs.

In doing so, the robot must not only determine its location and orientation in space, but also keep its balance and combine the signals from its sensors so that complex mechanisms such as the knee operate correctly.

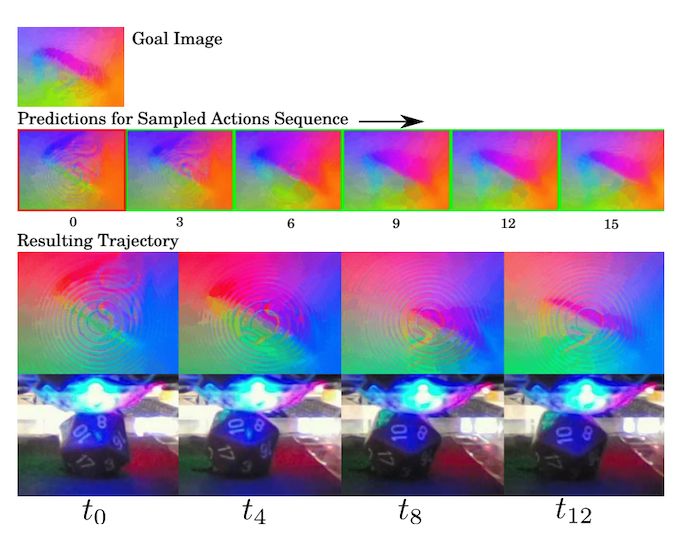

For computer vision, Facebook's robots use one of the algorithms that were developed to predict the popularity of videos. A neural network can analyze several seconds of video and predict the following frames without viewing them, which speeds up the analysis of large amounts of material.

As part of the experiment, Facebook Research introduced its first device: a manipulator that can operate a joystick, roll a 20-sided die, and correctly read the result that comes up at any given moment.

Combining visual and tactile information sources can improve learning methods and functionality of future self-learning platforms, according to Facebook.

According to the developers, similar projects currently use only one type of information (two at most), whereas robotic devices must perceive information from several senses to operate fully.
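One simple way to see why several senses can beat one is inverse-variance weighting, a standard statistical technique for merging two independent noisy measurements of the same quantity. The sketch below is only an illustration under that assumption and not the approach Facebook describes; the function name `fuse_estimates` and the example numbers are hypothetical.

```python
def fuse_estimates(visual, visual_var, tactile, tactile_var):
    """Merge two independent noisy estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance, so the
    more reliable sensor counts for more. Returns (value, variance).
    """
    w_visual = 1.0 / visual_var
    w_tactile = 1.0 / tactile_var
    fused = (w_visual * visual + w_tactile * tactile) / (w_visual + w_tactile)
    fused_var = 1.0 / (w_visual + w_tactile)
    return fused, fused_var

if __name__ == "__main__":
    # Hypothetical readings: the camera is noisier than the touch sensor.
    value, variance = fuse_estimates(10.0, 4.0, 12.0, 1.0)
    print(value, variance)
```

The fused variance is always smaller than the variance of either input, which is the statistical core of the claim that a second sense adds information rather than merely duplicating the first.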